Goal

Understanding multiple spatial and temporal scales in brain information processing based on in-vivo experimentation, computational analysis and computational synthesis

The 6 Key Concepts of BrainScaleS (Flyer.pdf)

Concept 1: In-vivo multiscale-recording from perceptual systems and analysis of collective features

The project focuses on the interdependency between the microscopic function of individual neurons, their mesoscopic interrelation and the resulting macroscopic organization. Simultaneous recordings at different scales will provide new insights into the emergence of collective cortical network behaviour. On the one hand, the goal is to find cases where the dynamics of the full system can be explained on the basis of lower-dimensional observables, suggestive of complexity reduction. On the other hand, demonstrating that microscopic properties are modulated by the instantaneous network state or by the imposed statistics of the external drive would provide evidence for immergence, which is specific to the nested hierarchy between the various levels of integration. Such a multiscale analysis has never been done previously in the context of complexity studies. It will provide essential information for understanding the nested non-linear dynamical mechanisms that give rise to the macroscopic regularity classically reported in the functional organization of cortical structures.

Concept 2: Generic principles of computation in parallel systems with local-global interaction

BrainScaleS plans to develop new mathematical concepts and tools to explain the experimental data on multi-scale dynamics and information processing of neural systems in-vivo. Three different approaches will be pursued: (1) A mean-field theory able to connect microscopic aspects with macroscopic properties of large populations of neurons. (2) A theoretical understanding of the interaction of synaptic plasticity on the micro-scale with macroscopic network states, the temporal and spatial dynamics of neuromodulators and learning behaviour in living organisms on the macroscale. (3) A study of probabilistic inference and learning in large Bayesian networks as a framework for understanding the dynamics and information flow as well as the characteristic trial-to-trial variability of neural systems in-vivo.

Concept 3: Creation and analysis of in-vivo like states in synthesized cortical networks

Two categories of models will be investigated in BrainScaleS. In the first category, the emphasis will be on architectures of very large size in terms of microscopic units (neurons). For the first time, models will be large enough to represent microscopic and macroscopic connectivities simultaneously. Consequently, the majority of the recurrent activity loops are closed and the origins of most of the synapses arriving in the local volume are incorporated. Thus, the origin of the rich activity structure observable in mesoscopic measures can be studied. In the second category of models, we will investigate systems of more modest size, but with computationally complex units. We will constrain the neuron models to capture the nonlinear intrinsic properties of real neurons, using experimental measurements that are included directly in the models. This type of model will be studied at the mesoscopic scale using mean-field approaches, which aim to incorporate this complexity at the level of interacting populations of neurons. These mean-field models will be scalable up to very large sizes and will be compared to mesoscopic (VSD, LFP) and macroscopic (EEG, EMG) measurements. One of the most foundational aspects is to learn how to map these cortical processing models to novel non-von Neumann computing architectures explicitly designed to map multiscale systems.

Concept 4: A non-von Neumann Hybrid Multiscale Facility

We will build a facility for the exploration of non-von Neumann computing architectures, in particular for multiscale emulations of neural systems. The Hybrid Multiscale Facility (HMF) combines a neuromorphic computing system, composed of custom-designed neural circuits in microelectronics, with conventional high-performance numerical computers. The neuromorphic system is a physical model of neural microcircuits featuring low energy consumption per neural event, fault tolerance, scalability and the capability to learn. Networks can be assembled from 1.6 million neurons and 0.4 billion dynamic synapses with user-configurable parameters and network architectures. Merging the two computational concepts into a hybrid system provides a new experimental platform that can bridge temporal scales from milliseconds to years and, at the same time, study spatial scales from the single-cell level to functional brain areas in a single experiment, at speeds far exceeding biological real time. With the numerical computing infrastructure as an integral part of the HMF, the system will provide virtual environments and generate sensory inputs as well as motor feedback in order to realise multiscale closed-loop experiments addressing cognitive tasks. The HMF development work will be complemented by a close collaboration with the Blue Brain Project for circuit development and the JUGENE petascale computing facility for brain-scale simulation studies.

Concept 5: Implementation and evaluation of closed-loop and open-loop perceptual demos

A set of 3 functional demonstration activities will link the multiscale studies carried out using biological sensory systems with functionally and architecturally equivalent synthetic systems and make quantitative statements on the validity of theories bridging multiple scales.

In demonstrator 1 we implement and test detailed, layered and brain-scale models of two cortical sensory processing modalities (visual and haptic) to simulate their neuronal dynamics.

Demonstrator 2 will extend these models to implement an integrated closed-loop network of networks mimicking a distributed hierarchy of sensory, decision and motor cortical areas, linking perception to action during operant conditioning tasks. The implementation should result in the reward-driven self-organisation of decision-making units with a differential gradient across the cortical area hierarchy.

In demonstrator 3, new concepts for generic neural-based computation are demonstrated. Network implementations based on probabilistic inference models are used to dissect the dynamics of information flow and decision making in the brain. The established formal mathematical connection between systems of partial differential equations and neural networks is used to study the internal representation of physical laws in learning networks acting on non-biological data.

Concept 6: Exploration of non-von Neumann computing outside the realm of brain-science

We plan to apply multi-scale information processing in the brain to computational tasks outside the realm of brain science. We will apply a new method for probabilistic inference in large Bayesian networks to networks of spiking neurons and implement this approach in the HMF. We will then apply the resulting configuration to standard benchmark data from machine learning, data mining, artificial intelligence and other application domains. In addition, we will test a new method for implementing learning in Bayesian networks through spike-timing-dependent plasticity (STDP) in the HMF. There is a good chance that this application will demonstrate a clear functional advantage of the inherent STDP capability of this new hardware. We will also study to what extent generic optimization problems or partial differential equations (PDEs) can be reformulated as networks of interacting artificial spiking neurons. We plan to illustrate this concept by solving a known class of PDEs using spiking neural networks, both in software and implemented on the HMF. This approach could have an important impact in areas such as weather forecasting, fluid dynamics, electromagnetism and many others. The possibility of using spiking neurons to solve PDEs, and more generally of using spiking neuron hardware as a tool to solve general classes of problems and equations, is a very promising direction for future ICT research.
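For readers unfamiliar with STDP, the following is a minimal sketch of a generic pair-based rule with an exponential timing window. It is not the project's learning method for Bayesian networks and does not model the HMF hardware; the function names (`stdp_dw`, `apply_stdp`) and all parameter values (amplitudes, time constants, weight bounds) are illustrative assumptions.

```python
import math


def stdp_dw(dt_ms, a_plus=0.01, a_minus=0.012, tau_plus=20.0, tau_minus=20.0):
    """Weight change for one pre/post spike pair.

    dt_ms is t_post - t_pre in milliseconds. All default parameter
    values are illustrative assumptions, not hardware settings.
    """
    if dt_ms > 0:
        # Pre fires before post: potentiation, decaying with the delay.
        return a_plus * math.exp(-dt_ms / tau_plus)
    if dt_ms < 0:
        # Post fires before pre: depression, decaying with the delay.
        return -a_minus * math.exp(dt_ms / tau_minus)
    return 0.0


def apply_stdp(weight, pre_spikes, post_spikes, w_min=0.0, w_max=1.0):
    """Sum the pairwise changes over all spike pairs, then clip the weight."""
    for t_pre in pre_spikes:
        for t_post in post_spikes:
            weight += stdp_dw(t_post - t_pre)
    return min(w_max, max(w_min, weight))


if __name__ == "__main__":
    # A presynaptic spike 5 ms before a postsynaptic spike strengthens the synapse.
    print(apply_stdp(0.5, pre_spikes=[0.0], post_spikes=[5.0]))
    # The reverse order weakens it.
    print(apply_stdp(0.5, pre_spikes=[5.0], post_spikes=[0.0]))
```

The all-to-all pairing used here is the simplest choice; nearest-neighbour pairing or other update schemes would change only the loop in `apply_stdp`.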

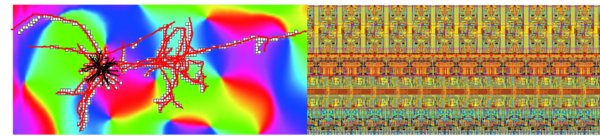

Left: axonal boutons and mesoscopic orientation (Kisvárday) Right: Electronic neural circuit (Heidelberg)

Partners

BrainScaleS comprises 18 groups from 15 partner institutions in 10 European countries (5 partners joined the project at the end of 2011).

- Koninklijke Nederlandse Akademie van Wetenschappen, Amsterdam, The Netherlands

- Universitetet for miljø- og biovitenskap, Ås, Norway

- Universitat Pompeu Fabra, Barcelona, Spain

- The Chancellor, Masters and Scholars of the University of Cambridge, Cambridge, UK

- Debreceni Egyetem, Debrecen, Hungary

- Technische Universität Dresden, Dresden, Germany

- Centre National de la Recherche Scientifique UNIC (Gif-sur-Yvette), INCM and ISM (Marseille), France

- Technische Universität Graz, Graz, Austria

- Ruprecht-Karls-Universität Heidelberg, Heidelberg, Germany

- Forschungszentrum Jülich GmbH, Jülich, Germany

- École Polytechnique Fédérale de Lausanne LCN and the Blue Brain Project, Lausanne, Switzerland

- The University Of Manchester, Manchester, UK

- Institut National de Recherche en Informatique et en Automatique, Sophia Antipolis, France

- Kungliga Tekniska Högskolan, Stockholm, Sweden

- Universität Zürich, Zürich, Switzerland

Funding


BrainScaleS is funded with 9.2 million Euro (8.5 million from the initial project, 0.7 million from the extension) for 4 years in the Future and Emerging Technologies (FET) programme as part of the EU's Seventh Framework Programme (FP7).

- Project Start: January 1st, 2011

- Project Number 269921

- Project extension under project number 287701 at the end of 2011